Home

OCPSG Benchmarking LLMs is the project website for a benchmark of Large Language Models (LLMs) and fine-tuned models on multilingual policy agenda annotation of parliamentary speeches. It covers benchmark development, documentation, and reproducible evaluation workflows.

The project is developed within the Oxford Computational Political Science Group (OCPSG), a research initiative supported by Oxford’s Department of Politics and International Relations.

What this project does

This website is the public entry point for the benchmark and its supporting infrastructure. It is designed to make benchmark design, model evaluation, and submission workflows more transparent, more standardised, and easier to reproduce across research teams.

Pipeline overview

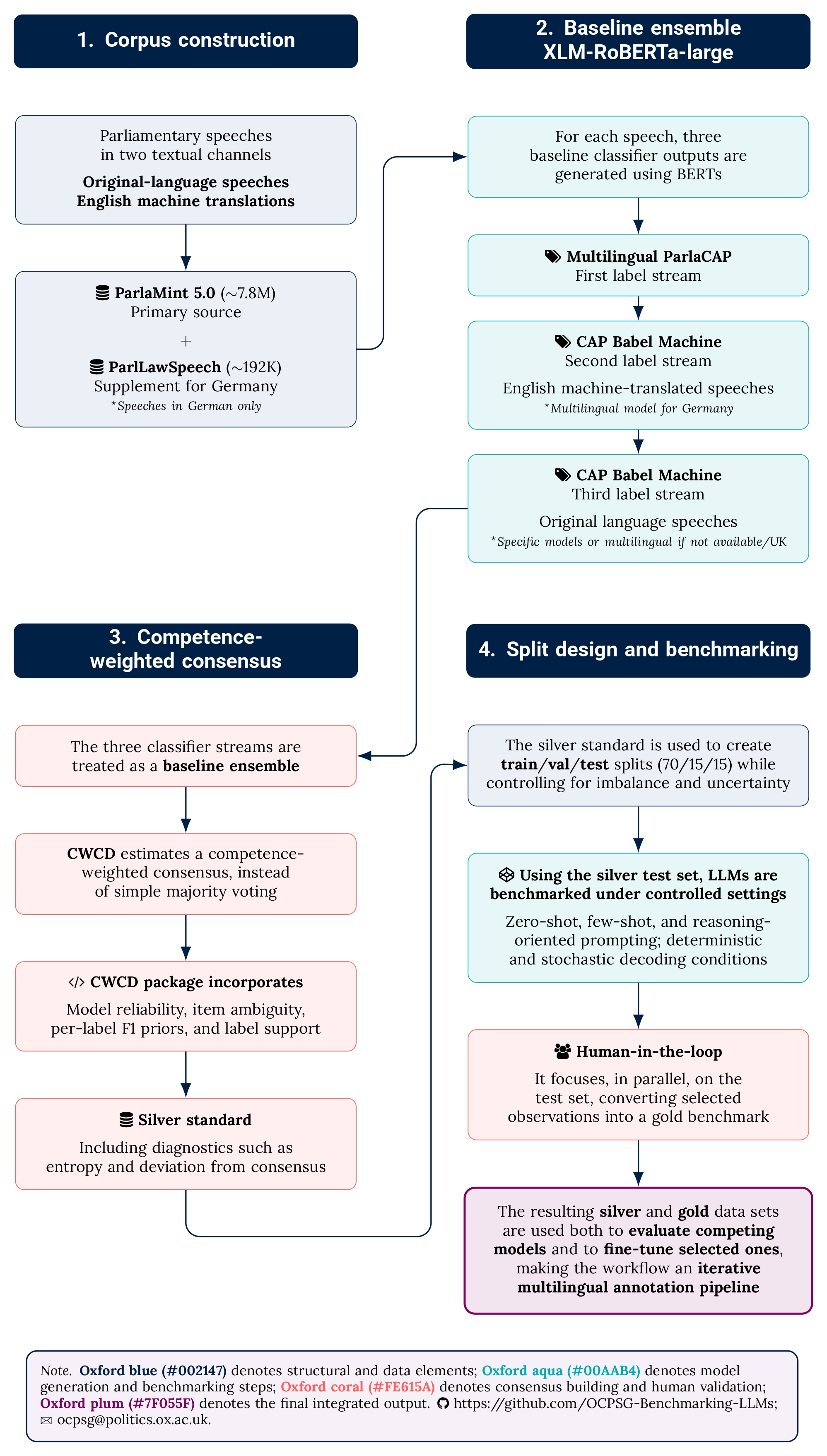

The benchmark workflow moves from multilingual parliamentary speech data to standardised evaluation outputs and documentation.

What this site provides

- multilingual benchmark datasets for parliamentary speech classification;

- standardised prediction and evaluation contracts;

- model wrappers and submission templates;

- reproducible scoring workflows and reporting conventions;

- documentation for benchmark use, outputs, and submissions.
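As a rough illustration of what a standardised prediction and evaluation contract could look like, the sketch below validates prediction rows against a fixed set of fields and scores them against reference labels. Everything in it is an assumption made for illustration: the field names (`speech_id`, `language`, `label`), the helper names `validate_predictions` and `macro_f1`, the example labels, and the choice of macro-averaged F1 are not taken from the project's actual specification.

```python
# Hypothetical contract: every prediction row carries exactly these fields.
# The field names are illustrative, not the project's real schema.
REQUIRED_FIELDS = ("speech_id", "language", "label")


def validate_predictions(rows):
    """Return a list of error messages; an empty list means the rows conform."""
    errors = []
    seen = set()
    for i, row in enumerate(rows):
        missing = [field for field in REQUIRED_FIELDS if field not in row]
        if missing:
            errors.append(f"row {i}: missing {missing}")
            continue
        if row["speech_id"] in seen:
            errors.append(f"row {i}: duplicate speech_id {row['speech_id']!r}")
        seen.add(row["speech_id"])
    return errors


def macro_f1(gold, predicted):
    """Unweighted mean of per-label F1 over the labels that occur in gold.

    Both arguments map speech_id -> label. Macro-F1 is an assumed metric,
    chosen here only because it is common for multi-class annotation tasks.
    """
    labels = sorted(set(gold.values()))
    scores = []
    for label in labels:
        tp = sum(1 for k, g in gold.items() if g == label and predicted.get(k) == label)
        fp = sum(1 for k, p in predicted.items() if p == label and gold.get(k) != label)
        fn = sum(1 for k, g in gold.items() if g == label and predicted.get(k) != label)
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores) if scores else 0.0


if __name__ == "__main__":
    gold = {"a": "health", "b": "economy", "c": "health"}
    predicted = {"a": "health", "b": "health", "c": "health"}
    print(macro_f1(gold, predicted))
```

Fixing the fields and the scoring function in one place means that every submission, whether from an LLM or a fine-tuned classifier, is checked and scored the same way.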

Current focus

The current development phase is centred on benchmarking workflows for policy agenda annotation using multilingual parliamentary text. The goal is to support systematic comparison across LLMs and fine-tuned classifiers under shared evaluation conditions. Work in this phase covers:

- silver-standard benchmark construction;

- multilingual parliamentary speech data;

- standardised evaluation pipelines;

- reproducible model submissions and outputs.

Explore the site

You can use this site to:

- learn about the benchmark and its scope;

- access documentation for workflows and submissions;

- browse project news and updates;

- navigate to related repositories and supporting materials.